How can Australia protect children from online pornography on big tech platforms?

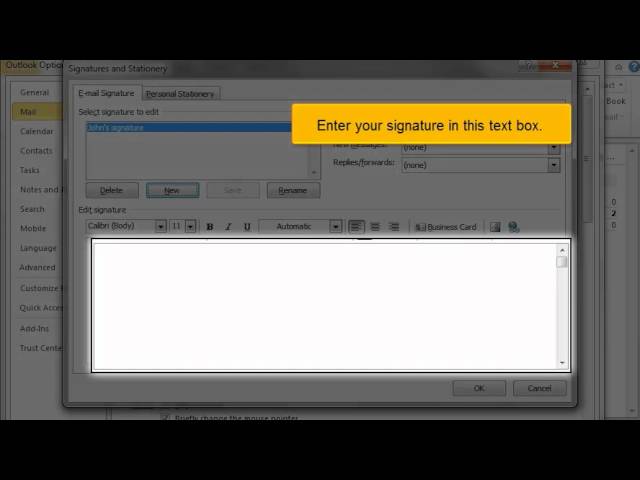

Australia’s eSafety Commissioner has given major tech platforms a six-month deadline to develop enforceable codes for protecting children from online pornography and other harmful content. According to the commissioner’s research, the average age at which children encounter pornography online is 13. Age verification is a challenging problem, especially where it governs adult access to pornographic content. The most reliable methods currently rely on government-issued identity documents, but few people would be comfortable revealing their porn habits to the government.

The commissioner envisions codes that cover the entire online ecosystem, including apps, websites, search engines, and service providers. Measures being considered include default Safe Search features on search engines and improved parental controls. Age assurance technology is also being explored, but existing technologies are unreliable and raise privacy concerns.

The discussion paper suggests a holistic approach involving collaboration between smartphone manufacturers, app store managers, and online platforms. Potential safety measures include default child safety features on smartphones and automatic scanning of images for nudity or high-impact content. However, no detection system is perfect, and platforms will need to balance parental control with a child’s right to access educational materials. Implementing these measures will require tech firms to work together, but whether this can be achieved within six months is uncertain.