We’ve been having conversations for thousands of years. Whether to convey information, conduct transactions, or simply to check in on one another, people have yammered away, chattering and gesticulating, through spoken conversation for countless generations. Only in the last few millennia have we begun to commit our conversations to writing, and only in the last few decades have we begun to outsource them to the computer, a machine that shows much more affinity for written correspondence than for the slangy vagaries of spoken language.

Computers have trouble because between spoken and written language, speech is more primordial. To have successful conversations with us, machines must grapple with the messiness of human speech: the disfluencies and pauses, the gestures and body language, and the variations in word choice and spoken dialect that can stymie even the most carefully crafted human-computer interaction. In the human-to-human scenario, spoken language also has the privilege of face-to-face contact, where we can readily interpret nonverbal social cues.

In contrast, written language immediately concretizes as we commit it to record and retains usages long after they become obsolete in spoken communication (the salutation “To whom it may concern,” for example), generating its own fossil record of outdated terms and phrases. Because it tends to be more consistent, polished, and formal, written text is fundamentally much easier for machines to parse and understand.

Spoken language has no such luxury. Besides the nonverbal cues that decorate conversations with emphasis and emotional context, there are also verbal cues and vocal behaviors that modulate conversation in nuanced ways: how something is said, not what. Whether rapid-fire, low-pitched, or high-decibel, whether sarcastic, stilted, or sighing, our spoken language conveys much more than the written word could ever muster. So when it comes to voice interfaces—the machines we conduct spoken conversations with—we face exciting challenges as designers and content strategists.

Voice Interactions

We interact with voice interfaces for a variety of reasons, but according to Michael McTear, Zoraida Callejas, and David Griol in The Conversational Interface, those motivations by and large mirror the reasons we initiate conversations with other people, too (http://bkaprt.com/vcu36/01-01). Generally, we start up a conversation because:

- we need something done (such as a transaction),

- we want to know something (information of some sort), or

- we are social beings and want someone to talk to (conversation for conversation’s sake).

These three categories—which I call transactional, informational, and prosocial—also characterize essentially every voice interaction: a single conversation from beginning to end that realizes some outcome for the user, starting with the voice interface’s first greeting and ending with the user exiting the interface. Note here that a conversation in our human sense—a chat between people that leads to some result and lasts an arbitrary length of time—could encompass multiple transactional, informational, and prosocial voice interactions in succession. In other words, a voice interaction is a conversation, but a conversation is not necessarily a single voice interaction.

Purely prosocial conversations are more gimmicky than captivating in most voice interfaces, because machines don’t yet have the capacity to really want to know how we’re doing and to do the sort of glad-handing humans crave. There’s also ongoing debate as to whether users actually prefer the sort of organic human conversation that begins with a prosocial voice interaction and shifts seamlessly into other types. In fact, in Voice User Interface Design, Michael Cohen, James Giangola, and Jennifer Balogh recommend sticking to users’ expectations by mimicking how they interact with other voice interfaces rather than trying too hard to be human—potentially alienating them in the process (http://bkaprt.com/vcu36/01-01).

That leaves two genres of conversations we can have with one another that a voice interface can easily have with us, too: a transactional voice interaction realizing some outcome (“buy iced tea”) and an informational voice interaction teaching us something new (“discuss a musical”).

Transactional voice interactions

Unless you’re tapping buttons on a food delivery app, you’re generally having a conversation—and therefore a voice interaction—when you order a Hawaiian pizza with extra pineapple. Even when we walk up to the counter and place an order, the conversation quickly pivots from an initial smattering of neighborly small talk to the real mission at hand: ordering a pizza (generously topped with pineapple, as it should be).

Alison: Hey, how’s it going?

Burhan: Hi, welcome to Crust Deluxe! It’s cold out there. How can I help you?

Alison: Can I get a Hawaiian pizza with extra pineapple?

Burhan: Sure, what size?

Alison: Large.

Burhan: Anything else?

Alison: No thanks, that’s it.

Burhan: Something to drink?

Alison: I’ll have a bottle of Coke.

Burhan: You got it. That’ll be $13.55 and about fifteen minutes.

Each progressive disclosure in this transactional conversation reveals more and more of the desired outcome of the transaction: a service rendered or a product delivered. Transactional conversations have certain key traits: they’re direct, to the point, and economical. They quickly dispense with pleasantries.

Informational voice interactions

Meanwhile, some conversations are primarily about obtaining information. Though Alison might visit Crust Deluxe with the sole purpose of placing an order, she might not actually want to walk out with a pizza at all. She might be just as interested in whether they serve halal or kosher dishes, gluten-free options, or something else. Here, though we again have a prosocial mini-conversation at the beginning to establish politeness, we’re after much more.

Alison: Hey, how’s it going?

Burhan: Hi, welcome to Crust Deluxe! It’s cold out there. How can I help you?

Alison: Can I ask a few questions?

Burhan: Of course! Go right ahead.

Alison: Do you have any halal options on the menu?

Burhan: Absolutely! We can make any pie halal by request. We also have lots of vegetarian, ovo-lacto, and vegan options. Are you thinking about any other dietary restrictions?

Alison: What about gluten-free pizzas?

Burhan: We can definitely do a gluten-free crust for you, no problem, for both our deep-dish and thin-crust pizzas. Anything else I can answer for you?

Alison: That’s it for now. Good to know. Thanks!

Burhan: Anytime, come back soon!

This is a very different dialogue. Here, the goal is to get a certain set of facts. Informational conversations are investigative quests for the truth—research expeditions to gather data, news, or facts. Voice interactions that are informational might be more long-winded than transactional conversations by necessity. Responses tend to be lengthier, more informative, and carefully communicated so the customer understands the key takeaways.

Voice Interfaces

At their core, voice interfaces employ speech to support users in reaching their goals. But simply because an interface has a voice component doesn’t mean that every user interaction with it is mediated through voice. Because multimodal voice interfaces can lean on visual components like screens as crutches, we’re most concerned in this book with pure voice interfaces, which depend entirely on spoken conversation, lack any visual component whatsoever, and are therefore much more nuanced and challenging to tackle.

Though voice interfaces have long been integral to the imagined future of humanity in science fiction, only recently have those lofty visions become fully realized in genuine voice interfaces.

Interactive voice response (IVR) systems

Though written conversational interfaces have been fixtures of computing for many decades, voice interfaces first emerged in the early 1990s with text-to-speech (TTS) dictation programs that recited written text aloud, as well as speech-enabled in-car systems that gave directions to a user-provided address. With the advent of interactive voice response (IVR) systems, intended as an alternative to overburdened customer service representatives, we became acquainted with the first true voice interfaces that engaged in authentic conversation.

IVR systems allowed organizations to reduce their reliance on call centers but soon became notorious for their clunkiness. Commonplace in the corporate world, these systems were primarily designed as metaphorical switchboards to guide customers to a real phone agent (“Say Reservations to book a flight or check an itinerary”); chances are you will enter a conversation with one when you call an airline or hotel conglomerate. Despite their functional issues and users’ frustration with their inability to speak to an actual human right away, IVR systems proliferated in the early 1990s across a variety of industries (http://bkaprt.com/vcu36/01-02, PDF).

While IVR systems are great for highly repetitive, monotonous conversations that generally don’t veer from a single format, they have a reputation for less scintillating conversation than we’re used to in real life (or even in science fiction).

Screen readers

Parallel to the evolution of IVR systems was the invention of the screen reader, a tool that transcribes visual content into synthesized speech. For Blind or visually impaired website users, it’s the predominant method of interacting with text, multimedia, or form elements. Screen readers represent perhaps the closest equivalent we have today to an out-of-the-box implementation of content delivered through voice.

Among the first screen readers known by that moniker was the Screen Reader for the BBC Micro and NEEC Portable developed by the Research Centre for the Education of the Visually Handicapped (RCEVH) at the University of Birmingham in 1986 (http://bkaprt.com/vcu36/01-03). That same year, Jim Thatcher created the first IBM Screen Reader for text-based computers, later recreated for computers with graphical user interfaces (GUIs) (http://bkaprt.com/vcu36/01-04).

With the rapid growth of the web in the 1990s, the demand for accessible tools for websites exploded. Thanks to the introduction of semantic HTML and especially ARIA roles beginning in 2008, screen readers started facilitating speedy interactions with web pages that ostensibly allow disabled users to traverse the page as an aural and temporal space rather than a visual and physical one. In other words, screen readers for the web “provide mechanisms that translate visual design constructs—proximity, proportion, etc.—into useful information,” writes Aaron Gustafson in A List Apart. “At least they do when documents are authored thoughtfully” (http://bkaprt.com/vcu36/01-05).

Though deeply instructive for voice interface designers, there’s one significant problem with screen readers: they’re difficult to use and unremittingly verbose. The visual structures of websites and web navigation don’t translate well to screen readers, sometimes resulting in unwieldy pronouncements that name every manipulable HTML element and announce every formatting change. For many screen reader users, working with web-based interfaces exacts a cognitive toll.

In Wired, accessibility advocate and voice engineer Chris Maury considers why the screen reader experience is ill-suited to users relying on voice:

From the beginning, I hated the way that Screen Readers work. Why are they designed the way they are? It makes no sense to present information visually and then, and only then, translate that into audio. All of the time and energy that goes into creating the perfect user experience for an app is wasted, or even worse, adversely impacting the experience for blind users. (http://bkaprt.com/vcu36/01-06)

In many cases, well-designed voice interfaces can speed users to their destination better than long-winded screen reader monologues. After all, visual interface users have the benefit of darting around the viewport freely to find information, ignoring areas irrelevant to them. Blind users, meanwhile, are obligated to listen to every utterance synthesized into speech and therefore prize brevity and efficiency. Disabled users who have long had no choice but to employ clunky screen readers may find that voice interfaces, particularly more modern voice assistants, offer a more streamlined experience.

Voice assistants

When we think of voice assistants (the subset of voice interfaces now commonplace in living rooms, smart homes, and offices), many of us immediately picture HAL from 2001: A Space Odyssey or hear Majel Barrett’s voice as the omniscient computer in Star Trek. Voice assistants are akin to personal concierges that can answer questions, schedule appointments, conduct searches, and perform other common day-to-day tasks. And they’re rapidly gaining more attention from accessibility advocates for their assistive potential.

Before the earliest IVR systems found success in the enterprise, Apple published a demonstration video in 1987 depicting the Knowledge Navigator, a voice assistant that could transcribe spoken words and recognize human speech to a great degree of accuracy. Then, in 2001, Tim Berners-Lee and others formulated their vision for a Semantic Web “agent” that would perform typical errands like “checking calendars, making appointments, and finding locations” (http://bkaprt.com/vcu36/01-07, behind paywall). It wasn’t until 2011 that Apple’s Siri finally entered the picture, making voice assistants a tangible reality for consumers.

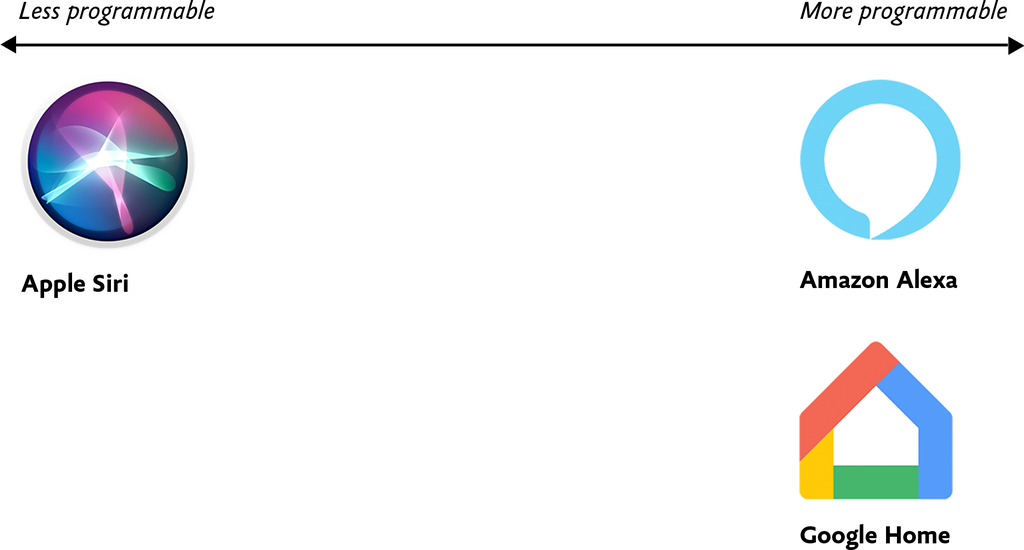

Thanks to the plethora of voice assistants available today, there is considerable variation in how programmable and customizable certain voice assistants are over others (Fig 1.1). At one extreme, everything except vendor-provided features is locked down; for example, at the time of their release, the core functionality of Apple’s Siri and Microsoft’s Cortana couldn’t be extended beyond their existing capabilities. Even today, it isn’t possible to program Siri to perform arbitrary functions, because there’s no means by which developers can interact with Siri at a low level, apart from predefined categories of tasks like sending messages, hailing rideshares, making restaurant reservations, and certain others.

At the opposite end of the spectrum, voice assistants like Amazon Alexa and Google Home offer a core foundation on which developers can build custom voice interfaces. For this reason, programmable voice assistants that lend themselves to customization and extensibility are becoming increasingly popular for developers who feel stifled by the limitations of Siri and Cortana. Amazon offers the Alexa Skills Kit, a developer framework for building custom voice interfaces for Amazon Alexa, while Google Home offers the ability to program arbitrary Google Assistant skills. Today, users can choose from among thousands of custom-built skills within both the Amazon Alexa and Google Assistant ecosystems.

As corporations like Amazon, Apple, Microsoft, and Google continue to stake their territory, they’re also selling and open-sourcing an unprecedented array of tools and frameworks for designers and developers that aim to make building voice interfaces as easy as possible, even without code.

Often by necessity, voice assistants like Amazon Alexa tend to be monochannel—they’re tightly coupled to a device and can’t be accessed on a computer or smartphone instead. By contrast, many development platforms like Google’s Dialogflow have introduced omnichannel capabilities so users can build a single conversational interface that then manifests as a voice interface, textual chatbot, and IVR system upon deployment. I don’t prescribe any specific implementation approaches in this design-focused book, but in Chapter 4 we’ll get into some of the implications these variables might have on the way you build out your design artifacts.

Voice Content

Simply put, voice content is content delivered through voice. To preserve what makes human conversation so compelling in the first place, voice content needs to be free-flowing and organic, contextless and concise—everything written content isn’t.

Our world is replete with voice content in various forms: screen readers reciting website content, voice assistants rattling off a weather forecast, and automated phone hotline responses governed by IVR systems. In this book, we’re most concerned with content delivered auditorily—not as an option, but as a necessity.

For many of us, our first foray into informational voice interfaces will be to deliver content to users. There’s only one problem: any content we already have isn’t in any way ready for this new habitat. So how do we make the content trapped on our websites more conversational? And how do we write new copy that lends itself to voice interactions?

Lately, we’ve begun slicing and dicing our content in unprecedented ways. Websites are, in many respects, colossal vaults of what I call macrocontent: lengthy prose that can extend for infinitely scrollable miles in a browser window, like microfilm viewers of newspaper archives. Back in 2002, well before the present-day ubiquity of voice assistants, technologist Anil Dash defined microcontent as permalinked pieces of content that stay legible regardless of environment, such as email or text messages:

A day’s weather forcast [sic], the arrival and departure times for an airplane flight, an abstract from a long publication, or a single instant message can all be examples of microcontent. (http://bkaprt.com/vcu36/01-08)

I’d update Dash’s definition of microcontent to include all examples of bite-sized content that go well beyond written communiqués. After all, today we encounter microcontent in interfaces where a small snippet of copy is displayed alone, unmoored from the browser, like a textbot confirmation of a restaurant reservation. Microcontent offers the best opportunity to gauge how your content can be stretched to the very edges of its capabilities, informing delivery channels both established and novel.

As microcontent, voice content is unique because it’s an example of how content is experienced in time rather than in space. We can glance at a digital sign underground for an instant and know when the next train is arriving, but voice interfaces hold our attention captive for periods of time that we can’t easily escape or skip, something screen reader users are all too familiar with.

Because microcontent is fundamentally made up of isolated blobs with no relation to the channels where they’ll eventually end up, we need to ensure that our microcontent truly performs well as voice content—and that means focusing on the two most important traits of robust voice content: voice content legibility and voice content discoverability.

Fundamentally, the legibility and discoverability of our voice content both have to do with how voice content manifests in perceived time and space.